Examples Gallery

A couple of examples to illustrate different implementations of dot product (see also sphinx-gallery).

Getting started

A PyTorch nightly build should be installed; see Start Locally.

git clone https://github.com/sdpython/experimental-experiment.git

pip install onnxruntime-gpu nvidia-ml-py

pip install -r requirements-dev.txt

export PYTHONPATH=$PYTHONPATH:<this folder>
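Once the steps above are done, a quick check can confirm that the packages are visible to the interpreter. The snippet below is a minimal sketch, not part of the repository: the helper `missing_modules` is hypothetical, and the module names (`torch`, `onnxruntime`, `pynvml`, `experimental_experiment`) are assumptions about what the installed packages and the cloned folder expose.

```python
import importlib.util


def missing_modules(names):
    """Return the top-level module names that cannot be found on the import path."""
    return [name for name in names if importlib.util.find_spec(name) is None]


# Assumed module names: onnxruntime-gpu is imported as `onnxruntime`,
# nvidia-ml-py as `pynvml`, and the cloned repository as `experimental_experiment`.
required = ["torch", "onnxruntime", "pynvml", "experimental_experiment"]
print("missing:", missing_modules(required) or "none")
```

If `experimental_experiment` is reported as missing, PYTHONPATH probably does not point at the cloned folder.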

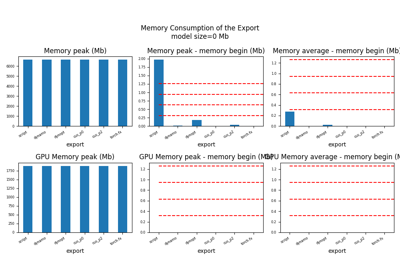

Compare torch exporters

The script measures the peak memory usage and the computation time of each exporter. It also compares the exported models when they are run through onnxruntime. The full script takes around 20 minutes to complete. It stores on disk all the graphs, the data used to draw them, and the models.

python _doc/examples/plot_torch_export.py -s large
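To give an idea of what measuring time and peak memory involves, here is a minimal sketch using only the standard library. It is not the code used by `plot_torch_export.py`: the helper `measure` and the workload `build_list` are hypothetical, and `tracemalloc` only sees memory allocated by the Python allocator, so it does not account for GPU memory or native buffers.

```python
import time
import tracemalloc


def measure(fn, *args, **kwargs):
    """Run fn once and return (result, elapsed_seconds, peak_bytes)."""
    tracemalloc.start()
    begin = time.perf_counter()
    try:
        result = fn(*args, **kwargs)
        elapsed = time.perf_counter() - begin
        # get_traced_memory returns (current, peak) in bytes since start()
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return result, elapsed, peak


def build_list(n):
    """Hypothetical workload standing in for an export call."""
    return [i * i for i in range(n)]


result, elapsed, peak = measure(build_list, 100_000)
print(f"elapsed={elapsed:.4f}s peak={peak / 1024:.1f} KiB")
```

A real benchmark would usually repeat the call several times and, for GPU runs, read device memory through a dedicated tool rather than `tracemalloc`.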

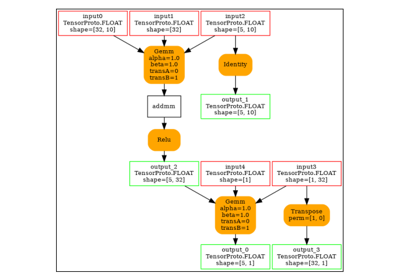

101: Onnx Model Optimization based on Pattern Rewriting

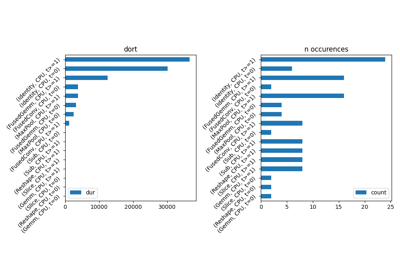

201: Evaluate different ways to export a torch model to ONNX

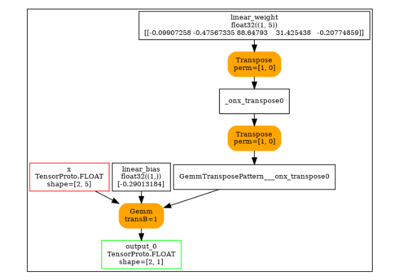

201: Use torch to export a scikit-learn model into ONNX
