Note

Go to the end to download the full example code.

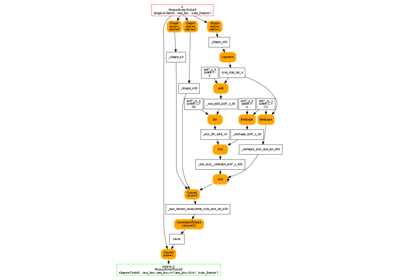

Export Phi-3.5-mini-instruct with report_exportability¶

Tries torch._export.tools.report_exportability().

Model¶

import pprint

from typing import Any, Dict

import torch

import torch._export.tools

import transformers

from onnx_diagnostic.helpers.cache_helper import make_dynamic_cache

from experimental_experiment.helpers import string_type

from onnx_diagnostic.torch_export_patches import register_additional_serialization_functions

def get_phi35_untrained(batch_size: int = 2, **kwargs) -> Dict[str, Any]:

"""

Gets a non initialized model with two sets of inputs and different shapes.

:param batch_size: batch size

:param kwargs: to overwrite the configuration, example ``num_hidden_layers=1``

:return: dictionary

See `Phi-3.5-mini-instruct/config.json

<https://huggingface.co/microsoft/Phi-3.5-mini-instruct/blob/main/config.json>`_.

"""

config = {

"_name_or_path": "Phi-3.5-mini-instruct",

"architectures": ["Phi3ForCausalLM"],

"attention_dropout": 0.0,

"auto_map": {

"AutoConfig": "configuration_phi3.Phi3Config",

"AutoModelForCausalLM": "modeling_phi3.Phi3ForCausalLM",

},

"bos_token_id": 1,

"embd_pdrop": 0.0,

"eos_token_id": 32000,

"hidden_act": "silu",

"hidden_size": 3072,

"initializer_range": 0.02,

"intermediate_size": 8192,

"max_position_embeddings": 131072,

"model_type": "phi3",

"num_attention_heads": 32,

"num_hidden_layers": 32,

"num_key_value_heads": 32,

"original_max_position_embeddings": 4096,

"pad_token_id": 32000,

"resid_pdrop": 0.0,

"rms_norm_eps": 1e-05,

"rope_scaling": {

"long_factor": [

1.0800000429153442,

1.1100000143051147,

1.1399999856948853,

1.340000033378601,

1.5899999141693115,

1.600000023841858,

1.6200000047683716,

2.620000123977661,

3.2300000190734863,

3.2300000190734863,

4.789999961853027,

7.400000095367432,

7.700000286102295,

9.09000015258789,

12.199999809265137,

17.670000076293945,

24.46000099182129,

28.57000160217285,

30.420001983642578,

30.840002059936523,

32.590003967285156,

32.93000411987305,

42.320003509521484,

44.96000289916992,

50.340003967285156,

50.45000457763672,

57.55000305175781,

57.93000411987305,

58.21000289916992,

60.1400032043457,

62.61000442504883,

62.62000274658203,

62.71000289916992,

63.1400032043457,

63.1400032043457,

63.77000427246094,

63.93000411987305,

63.96000289916992,

63.970001220703125,

64.02999877929688,

64.06999969482422,

64.08000183105469,

64.12000274658203,

64.41000366210938,

64.4800033569336,

64.51000213623047,

64.52999877929688,

64.83999633789062,

],

"short_factor": [

1.0,

1.0199999809265137,

1.0299999713897705,

1.0299999713897705,

1.0499999523162842,

1.0499999523162842,

1.0499999523162842,

1.0499999523162842,

1.0499999523162842,

1.0699999332427979,

1.0999999046325684,

1.1099998950958252,

1.1599998474121094,

1.1599998474121094,

1.1699998378753662,

1.2899998426437378,

1.339999794960022,

1.679999828338623,

1.7899998426437378,

1.8199998140335083,

1.8499997854232788,

1.8799997568130493,

1.9099997282028198,

1.9399996995925903,

1.9899996519088745,

2.0199997425079346,

2.0199997425079346,

2.0199997425079346,

2.0199997425079346,

2.0199997425079346,

2.0199997425079346,

2.0299997329711914,

2.0299997329711914,

2.0299997329711914,

2.0299997329711914,

2.0299997329711914,

2.0299997329711914,

2.0299997329711914,

2.0299997329711914,

2.0299997329711914,

2.0799996852874756,

2.0899996757507324,

2.189999580383301,

2.2199995517730713,

2.5899994373321533,

2.729999542236328,

2.749999523162842,

2.8399994373321533,

],

"type": "longrope",

},

"rope_theta": 10000.0,

"sliding_window": 262144,

"tie_word_embeddings": False,

"torch_dtype": "bfloat16",

"use_cache": True,

"attention_bias": False,

"vocab_size": 32064,

}

config.update(**kwargs)

conf = transformers.Phi3Config(**config)

model = transformers.Phi3ForCausalLM(conf)

model.eval()

cache = make_dynamic_cache(

[

(torch.randn(batch_size, 32, 30, 96), torch.randn(batch_size, 32, 30, 96))

for i in range(config["num_hidden_layers"])

]

)

cache2 = make_dynamic_cache(

[

(torch.randn(batch_size + 1, 32, 31, 96), torch.randn(batch_size + 1, 32, 31, 96))

for i in range(config["num_hidden_layers"])

]

)

inputs = dict(

input_ids=torch.randint(0, 32064, (batch_size, 3)).to(torch.int64),

attention_mask=torch.ones((batch_size, 33)).to(torch.int64),

past_key_values=cache,

)

inputs2 = dict(

input_ids=torch.randint(0, 32064, (batch_size + 1, 4)).to(torch.int64),

attention_mask=torch.ones((batch_size + 1, 35)).to(torch.int64),

past_key_values=cache2,

)

return dict(inputs=inputs, model=model, inputs2=inputs2)

data = get_phi35_untrained(num_hidden_layers=2)

model, inputs, inputs2 = data["model"], data["inputs"], data["inputs2"]

print(string_type(inputs, with_shape=True))

dict(input_ids:T7s2x3,attention_mask:T7s2x33,past_key_values:DynamicCache(key_cache=#2[T1s2x32x30x96,T1s2x32x30x96], value_cache=#2[T1s2x32x30x96,T1s2x32x30x96]))

Exportability¶

The function we want to try.

with register_additional_serialization_functions():

report = torch._export.tools.report_exportability(model, tuple(), kwargs=inputs, strict=False)

Let’s print the report.

{'': GuardOnDataDependentSymNode('Could not guard on data-dependent expression Eq(u0, 1) (unhinted: Eq(u0, 1)). (Size-like symbols: none)\n\nconsider using data-dependent friendly APIs such as guard_or_false, guard_or_true and statically_known_true.\nCaused by: (_subclasses/functional_tensor.py:332 in __bool__)\nFor more information, run with TORCH_LOGS="dynamic"\nFor extended logs when we create symbols, also add TORCHDYNAMO_EXTENDED_DEBUG_CREATE_SYMBOL="u0"\nIf you suspect the guard was triggered from C++, add TORCHDYNAMO_EXTENDED_DEBUG_CPP=1\nFor more debugging help, see https://docs.google.com/document/d/1HSuTTVvYH1pTew89Rtpeu84Ht3nQEFTYhAX3Ypa_xJs/edit?usp=sharing\n\nFor C++ stack trace, run with TORCHDYNAMO_EXTENDED_DEBUG_CPP=1'),

'model': GuardOnDataDependentSymNode('Could not guard on data-dependent expression Eq(u0, 1) (unhinted: Eq(u0, 1)). (Size-like symbols: none)\n\nconsider using data-dependent friendly APIs such as guard_or_false, guard_or_true and statically_known_true.\nCaused by: (_subclasses/functional_tensor.py:332 in __bool__)\nFor more information, run with TORCH_LOGS="dynamic"\nFor extended logs when we create symbols, also add TORCHDYNAMO_EXTENDED_DEBUG_CREATE_SYMBOL="u0"\nIf you suspect the guard was triggered from C++, add TORCHDYNAMO_EXTENDED_DEBUG_CPP=1\nFor more debugging help, see https://docs.google.com/document/d/1HSuTTVvYH1pTew89Rtpeu84Ht3nQEFTYhAX3Ypa_xJs/edit?usp=sharing\n\nFor C++ stack trace, run with TORCHDYNAMO_EXTENDED_DEBUG_CPP=1'),

'model.embed_tokens': None,

'model.layers.0': RuntimeError('Attempting to broadcast a dimension of length 33 at -1! Mismatching argument at index 1 had torch.Size([2, 1, 3, 33]); but expected shape should be broadcastable to [2, 32, 3, 36]'),

'model.layers.0.input_layernorm': None,

'model.layers.0.mlp': None,

'model.layers.0.resid_attn_dropout': None,

'model.layers.0.self_attn': RuntimeError('Attempting to broadcast a dimension of length 33 at -1! Mismatching argument at index 1 had torch.Size([2, 1, 3, 33]); but expected shape should be broadcastable to [2, 32, 3, 36]'),

'model.layers.0.self_attn.o_proj': None,

'model.rotary_emb': GuardOnDataDependentSymNode('Could not guard on data-dependent expression Eq(u0, 1) (unhinted: Eq(u0, 1)). (Size-like symbols: none)\n\nconsider using data-dependent friendly APIs such as guard_or_false, guard_or_true and statically_known_true.\nCaused by: (_subclasses/functional_tensor.py:332 in __bool__)\nFor more information, run with TORCH_LOGS="dynamic"\nFor extended logs when we create symbols, also add TORCHDYNAMO_EXTENDED_DEBUG_CREATE_SYMBOL="u0"\nIf you suspect the guard was triggered from C++, add TORCHDYNAMO_EXTENDED_DEBUG_CPP=1\nFor more debugging help, see https://docs.google.com/document/d/1HSuTTVvYH1pTew89Rtpeu84Ht3nQEFTYhAX3Ypa_xJs/edit?usp=sharing\n\nFor C++ stack trace, run with TORCHDYNAMO_EXTENDED_DEBUG_CPP=1')}

Total running time of the script: (0 minutes 6.447 seconds)

Related examples

to_onnx and padding one dimension to a mulitple of a constant

to_onnx and padding one dimension to a mulitple of a constant