TreeEnsemble optimization¶

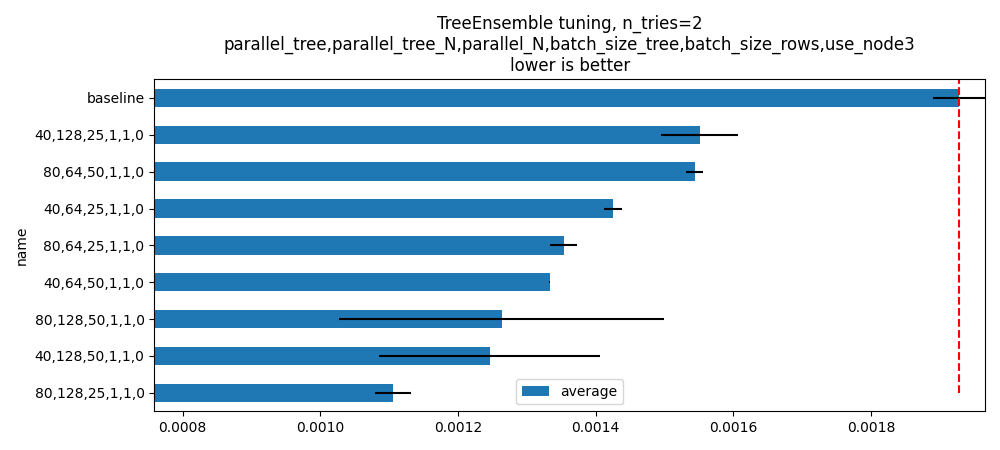

A TreeEnsembleRegressor can perform very differently depending on how the computation is parallelized: by trees, by rows, or by both, and on whether it processes a single row, a short batch of rows, or a longer one. The implementation in onnxruntime does not let the user change the predetermined settings, but a custom kernel might. That is what this example measures.
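To make the two loop orders concrete, here is a minimal pure-Python sketch. It is neither the onnxruntime kernel nor the custom one: the `trees` list holds hypothetical stand-in functions, and `predict_by_rows` and `predict_by_trees` are names invented for this illustration. It only shows that splitting the work per row or per tree yields the same sums, so the choice is about how the work is distributed across threads.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical stand-ins for decision trees: each maps a row to a float.
trees = [
    lambda row: 1.0 if row[0] <= 0.5 else -1.0,
    lambda row: 2.0 if row[1] <= 0.0 else 0.5,
    lambda row: -0.5 if row[0] <= -1.0 else 0.25,
]
rows = [(0.1, -0.3), (0.9, 0.4), (-2.0, 0.0), (0.5, 1.5)]


def predict_by_rows(trees, rows, max_workers=2):
    """Each task handles one row and sums every tree for that row."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(lambda row: sum(t(row) for t in trees), rows))


def predict_by_trees(trees, rows, max_workers=2):
    """Each task evaluates one tree on the whole batch; partial results are summed afterwards."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        partial = list(pool.map(lambda t: [t(row) for row in rows], trees))
    return [sum(column) for column in zip(*partial)]


by_rows = predict_by_rows(trees, rows)
by_trees = predict_by_trees(trees, rows)
print(by_rows)
print(by_trees)
```

Python threads do not speed up this toy code because of the global interpreter lock; in a native kernel the same choice decides which loop is distributed across cores and how partial sums are merged.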

The default set of parameters to optimize is deliberately small so that the example runs quickly. Many more values can be tried:

python plot_op_tree_ensemble_optim.py --scenario=LONG

To change the training parameters:

python plot_op_tree_ensemble_optim.py --n_trees=100 --max_depth=10 --n_features=50 --batch_size=100000

Another example with a full list of parameters:

python plot_op_tree_ensemble_optim.py --n_trees=100 --max_depth=10 --n_features=50 --batch_size=100000 --tries=3 --scenario=CUSTOM --parallel_tree=80,40 --parallel_tree_N=128,64 --parallel_N=50,25 --batch_size_tree=1,2 --batch_size_rows=1,2 --use_node3=0

Another example:

python plot_op_tree_ensemble_optim.py --n_trees=100 --n_features=10 --batch_size=10000 --max_depth=8 -s SHORT

import logging

import os

import timeit

from typing import Tuple

import numpy

import onnx

from onnx import ModelProto

from onnx.helper import make_graph, make_model

from onnx.reference import ReferenceEvaluator

from pandas import DataFrame

from sklearn.datasets import make_regression

from sklearn.ensemble import RandomForestRegressor

from skl2onnx import to_onnx

from onnxruntime import InferenceSession, SessionOptions

from onnx_array_api.plotting.text_plot import onnx_simple_text_plot

from onnx_extended.reference import CReferenceEvaluator

from onnx_extended.ortops.optim.cpu import get_ort_ext_libs

from onnx_extended.ortops.optim.optimize import (

change_onnx_operator_domain,

get_node_attribute,

optimize_model,

)

from onnx_extended.tools.onnx_nodes import multiply_tree

from onnx_extended.args import get_parsed_args

from onnx_extended.ext_test_case import unit_test_going

from onnx_extended.plotting.benchmark import hhistograms

logging.getLogger("matplotlib.font_manager").setLevel(logging.ERROR)

script_args = get_parsed_args(

"plot_op_tree_ensemble_optim",

description=__doc__,

scenarios={

"SHORT": "short optimization (default)",

"LONG": "test more options",

"CUSTOM": "use values specified by the command line",

},

n_features=(2 if unit_test_going() else 5, "number of features to generate"),

n_trees=(3 if unit_test_going() else 10, "number of trees to train"),

max_depth=(2 if unit_test_going() else 5, "max_depth"),

batch_size=(1000 if unit_test_going() else 10000, "batch size"),

parallel_tree=("80,160,40", "values to try for parallel_tree"),

parallel_tree_N=("256,128,64", "values to try for parallel_tree_N"),

parallel_N=("100,50,25", "values to try for parallel_N"),

batch_size_tree=("2,4,8", "values to try for batch_size_tree"),

batch_size_rows=("2,4,8", "values to try for batch_size_rows"),

use_node3=("0,1", "values to try for use_node3"),

expose="",

n_jobs=("-1", "number of jobs to train the RandomForestRegressor"),

)

Training a model¶

def train_model(
    batch_size: int, n_features: int, n_trees: int, max_depth: int
) -> Tuple[str, numpy.ndarray, numpy.ndarray]:
    filename = f"plot_op_tree_ensemble_optim-f{n_features}-{n_trees}-d{max_depth}.onnx"
    if not os.path.exists(filename):
        X, y = make_regression(
            batch_size + max(batch_size, 2 ** (max_depth + 1)),
            n_features=n_features,
            n_targets=1,
        )
        print(f"Training to get {filename!r} with X.shape={X.shape}")
        X, y = X.astype(numpy.float32), y.astype(numpy.float32)
        # To be faster, we train only 1 tree.
        model = RandomForestRegressor(
            1, max_depth=max_depth, verbose=2, n_jobs=int(script_args.n_jobs)
        )
        model.fit(X[:-batch_size], y[:-batch_size])
        onx = to_onnx(model, X[:1], target_opset={"": 18, "ai.onnx.ml": 3})
        # And we multiply the trees.
        node = multiply_tree(onx.graph.node[0], n_trees)
        onx = make_model(
            make_graph([node], onx.graph.name, onx.graph.input, onx.graph.output),
            domain=onx.domain,
            opset_imports=onx.opset_import,
            ir_version=onx.ir_version,
        )
        with open(filename, "wb") as f:
            f.write(onx.SerializeToString())
    else:
        X, y = make_regression(batch_size, n_features=n_features, n_targets=1)
        X, y = X.astype(numpy.float32), y.astype(numpy.float32)
    Xb, yb = X[-batch_size:].copy(), y[-batch_size:].copy()
    return filename, Xb, yb

batch_size = script_args.batch_size

n_features = script_args.n_features

n_trees = script_args.n_trees

max_depth = script_args.max_depth

print(f"batch_size={batch_size}")

print(f"n_features={n_features}")

print(f"n_trees={n_trees}")

print(f"max_depth={max_depth}")

batch_size=10000

n_features=5

n_trees=10

max_depth=5

training

filename, Xb, yb = train_model(batch_size, n_features, n_trees, max_depth)

print(f"Xb.shape={Xb.shape}")

print(f"yb.shape={yb.shape}")

Training to get 'plot_op_tree_ensemble_optim-f5-10-d5.onnx' with X.shape=(20000, 5)

[Parallel(n_jobs=-1)]: Using backend ThreadingBackend with 20 concurrent workers.

building tree 1 of 1

Xb.shape=(10000, 5)

yb.shape=(10000,)
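The training step fits a single tree and then duplicates it with `multiply_tree`. The sketch below illustrates the idea on two flat attributes only. It is not the onnx_extended implementation: `replicate_tree` is a hypothetical helper, and it assumes the node ids are repeated unchanged while each copy receives its own tree id, which is consistent with the attribute sizes printed for the saved model (630 nodes, that is 63 nodes for each of the 10 trees).

```python
def replicate_tree(node_ids, n_trees):
    """Repeat one tree's node ids n_trees times and build the matching tree ids.

    Hypothetical helper for illustration, not part of onnx_extended.
    """
    nodes_nodeids = list(node_ids) * n_trees
    nodes_treeids = [tree for tree in range(n_trees) for _ in node_ids]
    return nodes_treeids, nodes_nodeids


# A complete binary tree of depth 5 has 2**6 - 1 = 63 nodes.
single_tree_node_ids = list(range(2 ** 6 - 1))
tree_ids, node_ids = replicate_tree(single_tree_node_ids, 10)

print(len(node_ids))
print(tree_ids[:3], tree_ids[-3:])
print(node_ids[:3], node_ids[-3:])
```

Every other per-node attribute (feature ids, thresholds, child ids, leaf weights) would have to be repeated in the same way for the duplicated ensemble to be valid.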

Rewrite the onnx file to use a different kernel¶

The custom kernel is mapped to a custom operator with the same name and the same attributes, in the domain "onnx_extended.ortops.optim.cpu". A function performs that replacement. First, the current model.

opset: domain='ai.onnx.ml' version=1

opset: domain='' version=18

opset: domain='' version=18

input: name='X' type=dtype('float32') shape=['', 5]

TreeEnsembleRegressor(X, n_targets=1, nodes_falsenodeids=630:[32,17,10...62,0,0], nodes_featureids=630:[4,4,4...1,0,0], nodes_hitrates=630:[1.0,1.0...1.0,1.0], nodes_missing_value_tracks_true=630:[0,0,0...0,0,0], nodes_modes=630:[b'BRANCH_LEQ',b'BRANCH_LEQ'...b'LEAF',b'LEAF'], nodes_nodeids=630:[0,1,2...60,61,62], nodes_treeids=630:[0,0,0...9,9,9], nodes_truenodeids=630:[1,2,3...61,0,0], nodes_values=630:[-0.06802710890769958,-1.0575470924377441...0.0,0.0], post_transform=b'NONE', target_ids=320:[0,0,0...0,0,0], target_nodeids=320:[5,6,8...59,61,62], target_treeids=320:[0,0,0...9,9,9], target_weights=320:[-353.1763610839844,-263.4009094238281...168.70252990722656,218.80404663085938]) -> variable

output: name='variable' type=dtype('float32') shape=['', 1]

And then the modified model.

def transform_model(model, **kwargs):
    onx = ModelProto()
    onx.ParseFromString(model.SerializeToString())
    att = get_node_attribute(onx.graph.node[0], "nodes_modes")
    modes = ",".join([s.decode("ascii") for s in att.strings]).replace("BRANCH_", "")
    return change_onnx_operator_domain(
        onx,
        op_type="TreeEnsembleRegressor",
        op_domain="ai.onnx.ml",
        new_op_domain="onnx_extended.ortops.optim.cpu",
        nodes_modes=modes,
        **kwargs,
    )

print("Transform model to add a custom node.")
onx_modified = transform_model(onx)
print(f"Save into {filename + 'modified.onnx'!r}.")
with open(filename + "modified.onnx", "wb") as f:
    f.write(onx_modified.SerializeToString())
print("done.")
print(onnx_simple_text_plot(onx_modified))

Transform model to add a custom node.

Save into 'plot_op_tree_ensemble_optim-f5-10-d5.onnxmodified.onnx'.

done.

opset: domain='ai.onnx.ml' version=1

opset: domain='' version=18

opset: domain='' version=18

opset: domain='onnx_extended.ortops.optim.cpu' version=1

input: name='X' type=dtype('float32') shape=['', 5]

TreeEnsembleRegressor[onnx_extended.ortops.optim.cpu](X, nodes_modes=b'LEQ,LEQ,LEQ,LEQ,LEQ,LEAF,LEAF,LEQ,LEAF...LEAF,LEAF', n_targets=1, nodes_falsenodeids=630:[32,17,10...62,0,0], nodes_featureids=630:[4,4,4...1,0,0], nodes_hitrates=630:[1.0,1.0...1.0,1.0], nodes_missing_value_tracks_true=630:[0,0,0...0,0,0], nodes_nodeids=630:[0,1,2...60,61,62], nodes_treeids=630:[0,0,0...9,9,9], nodes_truenodeids=630:[1,2,3...61,0,0], nodes_values=630:[-0.06802710890769958,-1.0575470924377441...0.0,0.0], post_transform=b'NONE', target_ids=320:[0,0,0...0,0,0], target_nodeids=320:[5,6,8...59,61,62], target_treeids=320:[0,0,0...9,9,9], target_weights=320:[-353.1763610839844,-263.4009094238281...168.70252990722656,218.80404663085938]) -> variable

output: name='variable' type=dtype('float32') shape=['', 1]
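The visible difference between the two printouts, besides the domain, is `nodes_modes`: a list of byte strings in the standard operator, a single comma-separated string in the custom one. The short sketch below reproduces that conversion on a made-up list of modes, using the same expression as `transform_model`; the input values are illustrative only.

```python
# Illustrative modes, in the form stored by the standard operator (list of bytes).
nodes_modes = [b"BRANCH_LEQ", b"BRANCH_LEQ", b"LEAF", b"BRANCH_LEQ", b"LEAF", b"LEAF"]

# Same expression as in transform_model: decode, join, drop the BRANCH_ prefix.
modes = ",".join(s.decode("ascii") for s in nodes_modes).replace("BRANCH_", "")
print(modes)

# The compact string can be split back into one mode per node.
restored = modes.split(",")
print(len(restored) == len(nodes_modes))
```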

Comparing onnxruntime and the custom kernel¶

print(f"Loading {filename!r}")

sess_ort = InferenceSession(filename, providers=["CPUExecutionProvider"])

r = get_ort_ext_libs()

print(f"Creating SessionOptions with {r!r}")

opts = SessionOptions()

if r is not None:
    opts.register_custom_ops_library(r[0])

print(f"Loading modified {filename!r}")

sess_cus = InferenceSession(

onx_modified.SerializeToString(), opts, providers=["CPUExecutionProvider"]

)

print(f"Running once with shape {Xb.shape}.")

base = sess_ort.run(None, {"X": Xb})[0]

print(f"Running modified with shape {Xb.shape}.")

got = sess_cus.run(None, {"X": Xb})[0]

print("done.")

Loading 'plot_op_tree_ensemble_optim-f5-10-d5.onnx'

Creating SessionOptions with ['~/github/onnx-extended/onnx_extended/ortops/optim/cpu/libortops_optim_cpu.so']

Loading modified 'plot_op_tree_ensemble_optim-f5-10-d5.onnx'

Running once with shape (10000, 5).

Running modified with shape (10000, 5).

done.

Discrepancies?

Discrepancies: max=2.389814142134128e-07, mean=1.4949314675050118e-08 (A=1021.58837890625)
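The code producing this line is not shown here. One plausible reading, stated as an assumption, is that `max` and `mean` are absolute differences between the two outputs divided by `A`, the mean absolute value of the baseline output. A minimal sketch of such a relative measure, with the hypothetical helper `discrepancies` and made-up numbers:

```python
def discrepancies(expected, got):
    """Return (max, mean) absolute differences scaled by the mean magnitude of expected.

    Assumed definition for illustration; the original computation is not shown.
    """
    diffs = [abs(e - g) for e, g in zip(expected, got)]
    scale = sum(abs(e) for e in expected) / len(expected)
    return max(diffs) / scale, (sum(diffs) / len(diffs)) / scale, scale


# Made-up outputs, not taken from the run above.
base_values = [1000.0, -500.0, 1500.0, -1000.0]
got_values = [1000.0, -500.5, 1500.0, -1000.5]

d_max, d_mean, scale = discrepancies(base_values, got_values)
print(f"Discrepancies: max={d_max}, mean={d_mean} (A={scale})")
```

Scaling by the output magnitude makes the figure comparable across models: values around 1e-7, as reported above, are at the level of float32 rounding.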

Simple verification¶

Baseline with onnxruntime.

t1 = timeit.timeit(lambda: sess_ort.run(None, {"X": Xb}), number=50)

print(f"baseline: {t1}")

baseline: 0.11564644700047211

The custom implementation.

t2 = timeit.timeit(lambda: sess_cus.run(None, {"X": Xb}), number=50)

print(f"new time: {t2}")

new time: 0.499985831000231

The same implementation, but run from the onnx Python backend.

ref = CReferenceEvaluator(filename)

ref.run(None, {"X": Xb})

t3 = timeit.timeit(lambda: ref.run(None, {"X": Xb}), number=50)

print(f"CReferenceEvaluator: {t3}")

CReferenceEvaluator: 0.15682909299903258

The Python implementation, also run from the onnx Python backend.

ReferenceEvaluator: 2.793613915999231 (only 5 times instead of 50)
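`timeit.timeit` returns the total time for `number` calls, so the four figures above are not directly comparable: the last one covers 5 runs, the others 50. The sketch below converts the reported totals to a time per call; the labels are chosen for this illustration.

```python
# (total seconds, number of calls) as reported above.
totals = {
    "onnxruntime": (0.11564644700047211, 50),
    "custom kernel": (0.499985831000231, 50),
    "CReferenceEvaluator": (0.15682909299903258, 50),
    "ReferenceEvaluator": (2.793613915999231, 5),
}

per_call = {name: total / number for name, (total, number) in totals.items()}
baseline = per_call["onnxruntime"]

for name, seconds in per_call.items():
    ratio = seconds / baseline
    print(f"{name:>20}: {seconds * 1e3:8.3f} ms per call, {ratio:7.1f}x the baseline")
```

On this small model the custom kernel with its default settings is slower than onnxruntime, which is what motivates the parameter search in the next section.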

Time for comparison¶

The custom kernel supports the same attributes as TreeEnsembleRegressor plus new ones to tune the parallelization. They can be seen in tree_ensemble.cc. Let’s try out many possibilities. The default values are the first ones.

if unit_test_going():
    optim_params = dict(
        parallel_tree=[40],  # default is 80
        parallel_tree_N=[128],  # default is 128
        parallel_N=[50, 25],  # default is 50
        batch_size_tree=[1],  # default is 1
        batch_size_rows=[1],  # default is 1
        use_node3=[0],  # default is 0
    )
elif script_args.scenario in (None, "SHORT"):
    optim_params = dict(
        parallel_tree=[80, 40],  # default is 80
        parallel_tree_N=[128, 64],  # default is 128
        parallel_N=[50, 25],  # default is 50
        batch_size_tree=[1],  # default is 1
        batch_size_rows=[1],  # default is 1
        use_node3=[0],  # default is 0
    )
elif script_args.scenario == "LONG":
    optim_params = dict(
        parallel_tree=[80, 160, 40],
        parallel_tree_N=[256, 128, 64],
        parallel_N=[100, 50, 25],
        batch_size_tree=[1, 2, 4, 8],
        batch_size_rows=[1, 2, 4, 8],
        use_node3=[0, 1],
    )
elif script_args.scenario == "CUSTOM":
    optim_params = dict(
        parallel_tree=[int(i) for i in script_args.parallel_tree.split(",")],
        parallel_tree_N=[int(i) for i in script_args.parallel_tree_N.split(",")],
        parallel_N=[int(i) for i in script_args.parallel_N.split(",")],
        batch_size_tree=[int(i) for i in script_args.batch_size_tree.split(",")],
        batch_size_rows=[int(i) for i in script_args.batch_size_rows.split(",")],
        use_node3=[int(i) for i in script_args.use_node3.split(",")],
    )
else:
    raise ValueError(
        f"Unknown scenario {script_args.scenario!r}, use --help to get them."
    )

cmds = []

for att, value in optim_params.items():
    cmds.append(f"--{att}={','.join(map(str, value))}")

print("Full list of optimization parameters:")

print(" ".join(cmds))

Full list of optimization parameters:

--parallel_tree=80,40 --parallel_tree_N=128,64 --parallel_N=50,25 --batch_size_tree=1 --batch_size_rows=1 --use_node3=0
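The progress log further down reports 16 steps, which matches every combination of these values (2 x 2 x 2 x 1 x 1 x 1 = 8) measured over two tries. The sketch below enumerates such a grid with `itertools.product`. It assumes, based only on that log, that the search is a full Cartesian product; it does not reproduce the internals of `optimize_model`.

```python
from itertools import product

# Values of the SHORT scenario, as printed above.
optim_params = dict(
    parallel_tree=[80, 40],
    parallel_tree_N=[128, 64],
    parallel_N=[50, 25],
    batch_size_tree=[1],
    batch_size_rows=[1],
    use_node3=[0],
)
n_tries = 2  # inferred from TRY=0 and TRY=1 in the log, an assumption

names = list(optim_params)
grid = [dict(zip(names, values)) for values in product(*optim_params.values())]

print(f"{len(grid)} configurations, {len(grid) * n_tries} measurements")
for config in grid[:3]:
    print(config)
```

The grid grows multiplicatively: the LONG scenario listed above would give 3 x 3 x 3 x 4 x 4 x 2 = 864 configurations per try, which is why the default scenario is kept short.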

Then the optimization.

def create_session(onx):
    opts = SessionOptions()
    r = get_ort_ext_libs()
    if r is None:
        raise RuntimeError("No custom implementation available.")
    opts.register_custom_ops_library(r[0])
    return InferenceSession(
        onx.SerializeToString(), opts, providers=["CPUExecutionProvider"]
    )

res = optimize_model(

onx,

feeds={"X": Xb},

transform=transform_model,

session=create_session,

baseline=lambda onx: InferenceSession(

onx.SerializeToString(), providers=["CPUExecutionProvider"]

),

params=optim_params,

verbose=True,

number=script_args.number,

repeat=script_args.repeat,

warmup=script_args.warmup,

sleep=script_args.sleep,

n_tries=script_args.tries,

)

0%| | 0/16 [00:00<?, ?it/s]

i=1/16 TRY=0 //tree=80 //tree_N=128 //N=50 bs_tree=1 batch_size_rows=1 n3=0: 0%| | 0/16 [00:00<?, ?it/s]

i=1/16 TRY=0 //tree=80 //tree_N=128 //N=50 bs_tree=1 batch_size_rows=1 n3=0: 6%|▋ | 1/16 [00:00<00:07, 1.96it/s]

i=2/16 TRY=0 //tree=80 //tree_N=128 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.18x: 6%|▋ | 1/16 [00:00<00:07, 1.96it/s]

i=2/16 TRY=0 //tree=80 //tree_N=128 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.18x: 12%|█▎ | 2/16 [00:00<00:04, 3.18it/s]

i=3/16 TRY=0 //tree=80 //tree_N=64 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 12%|█▎ | 2/16 [00:00<00:04, 3.18it/s]

i=3/16 TRY=0 //tree=80 //tree_N=64 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 19%|█▉ | 3/16 [00:00<00:03, 3.47it/s]

i=4/16 TRY=0 //tree=80 //tree_N=64 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 19%|█▉ | 3/16 [00:00<00:03, 3.47it/s]

i=4/16 TRY=0 //tree=80 //tree_N=64 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 25%|██▌ | 4/16 [00:01<00:02, 4.01it/s]

i=5/16 TRY=0 //tree=40 //tree_N=128 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 25%|██▌ | 4/16 [00:01<00:02, 4.01it/s]

i=5/16 TRY=0 //tree=40 //tree_N=128 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 31%|███▏ | 5/16 [00:01<00:03, 3.60it/s]

i=6/16 TRY=0 //tree=40 //tree_N=128 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 31%|███▏ | 5/16 [00:01<00:03, 3.60it/s]

i=6/16 TRY=0 //tree=40 //tree_N=128 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 38%|███▊ | 6/16 [00:01<00:02, 3.58it/s]

i=7/16 TRY=0 //tree=40 //tree_N=64 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 38%|███▊ | 6/16 [00:01<00:02, 3.58it/s]

i=7/16 TRY=0 //tree=40 //tree_N=64 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 44%|████▍ | 7/16 [00:01<00:02, 3.75it/s]

i=8/16 TRY=0 //tree=40 //tree_N=64 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 44%|████▍ | 7/16 [00:01<00:02, 3.75it/s]

i=8/16 TRY=0 //tree=40 //tree_N=64 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 50%|█████ | 8/16 [00:02<00:01, 4.01it/s]

i=9/16 TRY=1 //tree=80 //tree_N=128 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 50%|█████ | 8/16 [00:02<00:01, 4.01it/s]

i=9/16 TRY=1 //tree=80 //tree_N=128 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 56%|█████▋ | 9/16 [00:02<00:01, 3.63it/s]

i=10/16 TRY=1 //tree=80 //tree_N=128 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 56%|█████▋ | 9/16 [00:02<00:01, 3.63it/s]

i=10/16 TRY=1 //tree=80 //tree_N=128 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 62%|██████▎ | 10/16 [00:02<00:01, 3.88it/s]

i=11/16 TRY=1 //tree=80 //tree_N=64 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 62%|██████▎ | 10/16 [00:02<00:01, 3.88it/s]

i=11/16 TRY=1 //tree=80 //tree_N=64 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 69%|██████▉ | 11/16 [00:02<00:01, 4.20it/s]

i=12/16 TRY=1 //tree=80 //tree_N=64 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 69%|██████▉ | 11/16 [00:02<00:01, 4.20it/s]

i=12/16 TRY=1 //tree=80 //tree_N=64 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.56x: 75%|███████▌ | 12/16 [00:03<00:00, 4.50it/s]

i=13/16 TRY=1 //tree=40 //tree_N=128 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.57x: 75%|███████▌ | 12/16 [00:03<00:00, 4.50it/s]

i=13/16 TRY=1 //tree=40 //tree_N=128 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.57x: 81%|████████▏ | 13/16 [00:03<00:00, 4.86it/s]

i=14/16 TRY=1 //tree=40 //tree_N=128 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.65x: 81%|████████▏ | 13/16 [00:03<00:00, 4.86it/s]

i=14/16 TRY=1 //tree=40 //tree_N=128 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.65x: 88%|████████▊ | 14/16 [00:03<00:00, 5.16it/s]

i=15/16 TRY=1 //tree=40 //tree_N=64 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.65x: 88%|████████▊ | 14/16 [00:03<00:00, 5.16it/s]

i=15/16 TRY=1 //tree=40 //tree_N=64 //N=50 bs_tree=1 batch_size_rows=1 n3=0 ~=0.65x: 94%|█████████▍| 15/16 [00:03<00:00, 5.32it/s]

i=16/16 TRY=1 //tree=40 //tree_N=64 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.70x: 94%|█████████▍| 15/16 [00:03<00:00, 5.32it/s]

i=16/16 TRY=1 //tree=40 //tree_N=64 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.70x: 100%|██████████| 16/16 [00:03<00:00, 5.69it/s]

i=16/16 TRY=1 //tree=40 //tree_N=64 //N=25 bs_tree=1 batch_size_rows=1 n3=0 ~=0.70x: 100%|██████████| 16/16 [00:03<00:00, 4.23it/s]

And the results.

df = DataFrame(res)

df.to_csv("plot_op_tree_ensemble_optim.csv", index=False)

df.to_excel("plot_op_tree_ensemble_optim.xlsx", index=False)

print(df.columns)

print(df.head(5))

Index(['average', 'deviation', 'min_exec', 'max_exec', 'repeat', 'number',

'ttime', 'context_size', 'warmup_time', 'n_exp', 'n_exp_name',

'short_name', 'TRY', 'name', 'parallel_tree', 'parallel_tree_N',

'parallel_N', 'batch_size_tree', 'batch_size_rows', 'use_node3'],

dtype='str')

average deviation min_exec max_exec repeat number ttime context_size warmup_time ... short_name TRY name parallel_tree parallel_tree_N parallel_N batch_size_tree batch_size_rows use_node3

0 0.000354 0.000062 0.000262 0.000466 10 10 0.003535 64 0.001924 ... 0,baseline 0.0 baseline NaN NaN NaN NaN NaN NaN

1 0.001993 0.002270 0.000258 0.008499 10 10 0.019929 64 0.058638 ... 0,80,128,50,1,1,0 NaN 80,128,50,1,1,0 80.0 128.0 50.0 1.0 1.0 0.0

2 0.000629 0.000381 0.000297 0.001395 10 10 0.006285 64 0.003729 ... 0,80,128,25,1,1,0 NaN 80,128,25,1,1,0 80.0 128.0 25.0 1.0 1.0 0.0

3 0.001011 0.001050 0.000271 0.003968 10 10 0.010112 64 0.043027 ... 0,80,64,50,1,1,0 NaN 80,64,50,1,1,0 80.0 64.0 50.0 1.0 1.0 0.0

4 0.000764 0.000408 0.000298 0.001510 10 10 0.007641 64 0.002667 ... 0,80,64,25,1,1,0 NaN 80,64,25,1,1,0 80.0 64.0 25.0 1.0 1.0 0.0

[5 rows x 20 columns]

Sorting¶

small_df = df.drop(

[

"min_exec",

"max_exec",

"repeat",

"number",

"context_size",

"n_exp_name",

],

axis=1,

).sort_values("average")

print(small_df.head(n=10))

average deviation ttime warmup_time n_exp short_name TRY name parallel_tree parallel_tree_N parallel_N batch_size_tree batch_size_rows use_node3

17 0.000325 0.000027 0.003249 0.002055 0 1,baseline 1.0 baseline NaN NaN NaN NaN NaN NaN

16 0.000343 0.000156 0.003428 0.002574 15 1,40,64,25,1,1,0 NaN 40,64,25,1,1,0 40.0 64.0 25.0 1.0 1.0 0.0

0 0.000354 0.000062 0.003535 0.001924 0 0,baseline 0.0 baseline NaN NaN NaN NaN NaN NaN

15 0.000508 0.000281 0.005079 0.002439 14 1,40,64,50,1,1,0 NaN 40,64,50,1,1,0 40.0 64.0 50.0 1.0 1.0 0.0

13 0.000542 0.000282 0.005419 0.002062 12 1,40,128,50,1,1,0 NaN 40,128,50,1,1,0 40.0 128.0 50.0 1.0 1.0 0.0

14 0.000570 0.000240 0.005703 0.001852 13 1,40,128,25,1,1,0 NaN 40,128,25,1,1,0 40.0 128.0 25.0 1.0 1.0 0.0

12 0.000622 0.000621 0.006220 0.003057 11 1,80,64,25,1,1,0 NaN 80,64,25,1,1,0 80.0 64.0 25.0 1.0 1.0 0.0

2 0.000629 0.000381 0.006285 0.003729 1 0,80,128,25,1,1,0 NaN 80,128,25,1,1,0 80.0 128.0 25.0 1.0 1.0 0.0

11 0.000763 0.000580 0.007631 0.002873 10 1,80,64,50,1,1,0 NaN 80,64,50,1,1,0 80.0 64.0 50.0 1.0 1.0 0.0

4 0.000764 0.000408 0.007641 0.002667 3 0,80,64,25,1,1,0 NaN 80,64,25,1,1,0 80.0 64.0 25.0 1.0 1.0 0.0

Worst¶

print(small_df.tail(n=10))

average deviation ttime warmup_time n_exp short_name TRY name parallel_tree parallel_tree_N parallel_N batch_size_tree batch_size_rows use_node3

11 0.000763 0.000580 0.007631 0.002873 10 1,80,64,50,1,1,0 NaN 80,64,50,1,1,0 80.0 64.0 50.0 1.0 1.0 0.0

4 0.000764 0.000408 0.007641 0.002667 3 0,80,64,25,1,1,0 NaN 80,64,25,1,1,0 80.0 64.0 25.0 1.0 1.0 0.0

8 0.000992 0.000444 0.009919 0.002969 7 0,40,64,25,1,1,0 NaN 40,64,25,1,1,0 40.0 64.0 25.0 1.0 1.0 0.0

3 0.001011 0.001050 0.010112 0.043027 2 0,80,64,50,1,1,0 NaN 80,64,50,1,1,0 80.0 64.0 50.0 1.0 1.0 0.0

10 0.001056 0.000739 0.010563 0.003858 9 1,80,128,25,1,1,0 NaN 80,128,25,1,1,0 80.0 128.0 25.0 1.0 1.0 0.0

7 0.001241 0.000703 0.012414 0.004669 6 0,40,64,50,1,1,0 NaN 40,64,50,1,1,0 40.0 64.0 50.0 1.0 1.0 0.0

6 0.001633 0.000878 0.016326 0.002083 5 0,40,128,25,1,1,0 NaN 40,128,25,1,1,0 40.0 128.0 25.0 1.0 1.0 0.0

1 0.001993 0.002270 0.019929 0.058638 0 0,80,128,50,1,1,0 NaN 80,128,50,1,1,0 80.0 128.0 50.0 1.0 1.0 0.0

5 0.002074 0.001579 0.020744 0.002373 4 0,40,128,50,1,1,0 NaN 40,128,50,1,1,0 40.0 128.0 50.0 1.0 1.0 0.0

9 0.002169 0.002314 0.021687 0.002561 8 1,80,128,50,1,1,0 NaN 80,128,50,1,1,0 80.0 128.0 50.0 1.0 1.0 0.0
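The two tables show that the same configuration can differ a lot between tries (for example 40,128,50,1,1,0 takes 0.002074 s in the first try and 0.000542 s in the second), so ranking single rows may be misleading. One option, sketched below with the standard library only, is to average each configuration over its tries before sorting. The rows are copied from the output above; the averaging strategy is a suggestion, not something the original script does.

```python
from collections import defaultdict
from statistics import mean

# (name, try, average in seconds), copied from the two tables above.
measurements = [
    ("baseline", 0, 0.000354), ("baseline", 1, 0.000325),
    ("80,128,50,1,1,0", 0, 0.001993), ("80,128,50,1,1,0", 1, 0.002169),
    ("80,128,25,1,1,0", 0, 0.000629), ("80,128,25,1,1,0", 1, 0.001056),
    ("80,64,50,1,1,0", 0, 0.001011), ("80,64,50,1,1,0", 1, 0.000763),
    ("80,64,25,1,1,0", 0, 0.000764), ("80,64,25,1,1,0", 1, 0.000622),
    ("40,128,50,1,1,0", 0, 0.002074), ("40,128,50,1,1,0", 1, 0.000542),
    ("40,128,25,1,1,0", 0, 0.001633), ("40,128,25,1,1,0", 1, 0.000570),
    ("40,64,50,1,1,0", 0, 0.001241), ("40,64,50,1,1,0", 1, 0.000508),
    ("40,64,25,1,1,0", 0, 0.000992), ("40,64,25,1,1,0", 1, 0.000343),
]

# Group the averages by configuration name, ignoring the try index.
by_name = defaultdict(list)
for name, _try, average in measurements:
    by_name[name].append(average)

# Sort configurations by their mean time over all tries (fastest first).
ranking = sorted((mean(values), name) for name, values in by_name.items())
baseline = mean(by_name["baseline"])

for avg, name in ranking:
    print(f"{name:>16}: {avg:.6f} s, {baseline / avg:.2f}x the baseline speed")
```

With pandas, the equivalent would be `df.groupby("name")["average"].mean().sort_values()`. In this run, no custom configuration beats the onnxruntime baseline once the tries are averaged.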

Plot¶

skeys = ",".join(optim_params.keys())

title = f"TreeEnsemble tuning, n_tries={script_args.tries}\n{skeys}\nlower is better"

ax = hhistograms(df, title=title, keys=("name",))

fig = ax.get_figure()

fig.savefig("plot_op_tree_ensemble_optim.png")

Total running time of the script: (0 minutes 8.536 seconds)