
Measuring CPU performance

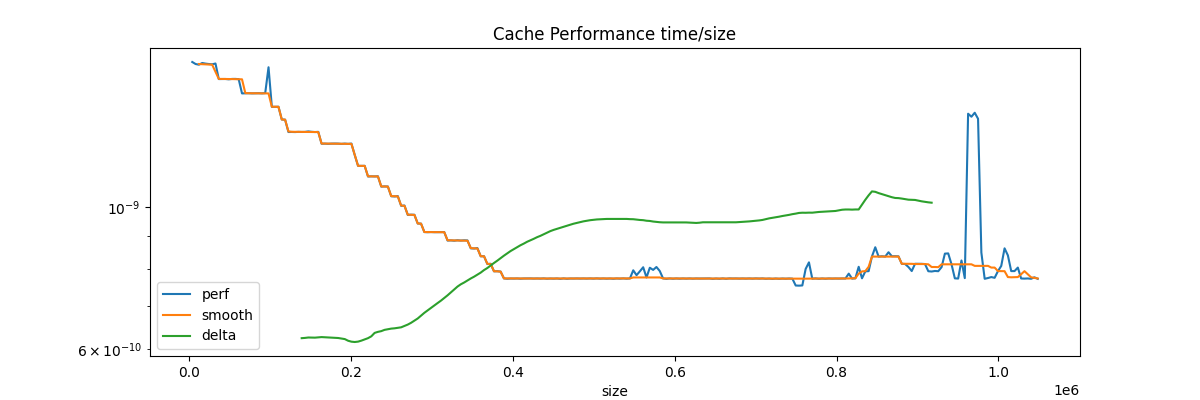

Processor caches must be taken into account when writing an algorithm; see "Memory part 2: CPU caches" by Ulrich Drepper.
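
The following small illustration is not part of the original benchmark. It sums the same number of float64 values twice with NumPy: once from a contiguous array and once through a strided view that reads one value every 128 bytes. Assuming 64-byte cache lines, the strided read pulls in a new cache line for every element, so on most machines it is noticeably slower even though the arithmetic is identical. The function name `time_strided_sum` and the sizes are arbitrary choices.

```python
import time

import numpy as np


def time_strided_sum(n_elements, stride, repeat=5):
    """Best wall-clock time (seconds) to sum n_elements float64 values.

    Illustrative sketch, not part of onnx_extended: the values are read from a
    view taking every ``stride``-th element of a larger buffer, so a larger
    stride spreads the same amount of work over more memory.
    """
    buf = np.ones(n_elements * stride)
    view = buf[::stride]  # n_elements values, stride * 8 bytes apart
    best = float("inf")
    for _ in range(repeat):
        start = time.perf_counter()
        view.sum()
        best = min(best, time.perf_counter() - start)
    return best


n = 500_000
contiguous = time_strided_sum(n, 1)
strided = time_strided_sum(n, 16)  # 16 * 8 bytes = 128 bytes between values
print(f"contiguous: {contiguous * 1e3:.3f} ms, strided: {strided * 1e3:.3f} ms")
```

The exact ratio depends on the CPU, the memory subsystem and NumPy's summation loop.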

Cache Performance

```python
from tqdm import tqdm
import matplotlib.pyplot as plt
from pyquickhelper.loghelper import run_cmd
from pandas import DataFrame, concat
from onnx_extended.ext_test_case import unit_test_going
from onnx_extended.validation.cpu._validation import (
    benchmark_cache,
    benchmark_cache_tree,
)

obs = []
step = 2**12
for i in tqdm(range(step, 2**20 + step, step)):
    # keep the best of three runs to reduce noise
    res = min(
        [
            benchmark_cache(i, False),
            benchmark_cache(i, False),
            benchmark_cache(i, False),
        ]
    )
    if res < 0:
        # overflow
        continue
    obs.append(dict(size=i, perf=res))

df = DataFrame(obs)
mean = df.perf.mean()
lag = 32
for i in range(2, df.shape[0]):
    # running median over up to 17 points (.loc slices include both ends)
    df.loc[i, "smooth"] = df.loc[i - 8 : i + 8, "perf"].median()
    if i > lag and i < df.shape[0] - lag:
        # global mean + mean after i - mean before i:
        # large values mark a jump in the timings
        df.loc[i, "delta"] = (
            mean
            + df.loc[i : i + lag, "perf"].mean()
            - df.loc[i - lag + 1 : i + 1, "perf"]
        ).mean()
```
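
The `delta` column scores each position by the mean of the next `lag` timings minus the mean of the previous ones, shifted by the global mean, which does not change where the maximum is. A minimal NumPy sketch of the same idea on synthetic timings that jump at index 100 shows why `argmax` lands on the size where the timings step up. The function `step_score` and the data are hypothetical; the sketch uses non-overlapping windows, which differ slightly from the inclusive `.loc` slices in the pandas code.

```python
import numpy as np


def step_score(perf, lag=32):
    """For each index i, mean(perf[i:i+lag]) - mean(perf[i-lag:i]).

    Hypothetical helper mirroring the "delta" column; indices without a full
    window on both sides are left as NaN.
    """
    perf = np.asarray(perf, dtype=float)
    score = np.full(perf.shape, np.nan)
    for i in range(lag, perf.size - lag + 1):
        score[i] = perf[i : i + lag].mean() - perf[i - lag : i].mean()
    return score


# Synthetic timings: flat until index 100, then three times slower,
# as if the working set no longer fitted in one cache level.
rng = np.random.default_rng(0)
perf = np.concatenate([np.full(100, 1.0), np.full(156, 3.0)])
perf += rng.normal(0.0, 0.01, perf.size)

score = step_score(perf, lag=32)
jump = int(np.nanargmax(score))
print("detected jump at index", jump)
```

With real measurements the jump is noisier and spread over several sizes, which is why the example averages over a window of `lag` points rather than comparing neighbours.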


Cache size estimator

```python
# position of the largest jump in the timings, then the doubled size there
cache_size_index = int(df.delta.argmax())
cache_size = df.loc[cache_size_index, "size"] * 2
print(f"L2 cache size estimation is {cache_size / 2 ** 20:1.3f} MiB.")
```

L2 cache size estimation is 1.609 MiB.
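
As a worked check of the conversion, assume the `size` column is in bytes, as the division by `2 ** 20` suggests. Sizes advance by `step = 2**12` bytes and the estimate doubles the size at the detected jump, so the estimate moves in increments of 8192 bytes, that is 1/128 of 2**20 bytes. The printed 1.609 is consistent with a jump at 206 steps (843,776 bytes). The helper `estimate_mib` is hypothetical.

```python
STEP = 2**12  # increment of the "size" column in the benchmark loop


def estimate_mib(n_steps):
    """Hypothetical helper: doubled size at the jump, bytes -> units of 2**20 bytes."""
    size = n_steps * STEP  # assumed to be bytes
    return size * 2 / 2**20


print(f"{estimate_mib(206):1.3f}")  # 206 * 4096 * 2 / 2**20 = 1.609375 -> "1.609"
print(2 * STEP / 2**20)  # resolution of the estimate: 0.0078125
```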

Verification

```python
try:
    out, err = run_cmd("lscpu", wait=True)
    print("\n".join(_ for _ in out.split("\n") if "cache:" in _))
except Exception as e:
    print(f"failed due to {e}")

df = df.set_index("size")
fig, ax = plt.subplots(1, 1, figsize=(12, 4))
df.plot(ax=ax, title="Cache Performance time/size", logy=True)
fig.savefig("plot_benchmark_cpu_array.png")
```

L1d cache: 128 KiB (4 instances)

L1i cache: 128 KiB (4 instances)

L2 cache: 1 MiB (4 instances)

L3 cache: 8 MiB (1 instance)
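
To compare the estimate with these lines programmatically, a small parser can turn each one into a byte count. This is a sketch: `parse_cache_line` is a hypothetical helper, and it assumes the format shown above (a size with a binary unit and an instance count). Older `lscpu` versions print forms such as `256K` without a count, which the pattern also accepts. In the newer format the reported size is typically the total over all instances, so the helper also reports the per-instance value.

```python
import re

# Hypothetical parser for lscpu cache lines; the format is an assumption.
UNITS = {"K": 2**10, "KiB": 2**10, "M": 2**20, "MiB": 2**20, "G": 2**30, "GiB": 2**30}
PATTERN = re.compile(
    r"\s*(\S+) cache:\s*([\d.]+)\s*(KiB|MiB|GiB|K|M|G)(?:\s*\((\d+) instances?\))?"
)


def parse_cache_line(line):
    """Return (level, total_bytes, instances) for an lscpu cache line, or None."""
    match = PATTERN.match(line)
    if match is None:
        return None
    level, value, unit, instances = match.groups()
    return level, int(float(value) * UNITS[unit]), int(instances) if instances else 1


for line in [
    "L1d cache: 128 KiB (4 instances)",
    "L2 cache: 1 MiB (4 instances)",
    "L3 cache: 8 MiB (1 instance)",
]:
    level, total, count = parse_cache_line(line)
    print(level, total, "bytes in total,", total // count, "bytes per instance")
```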

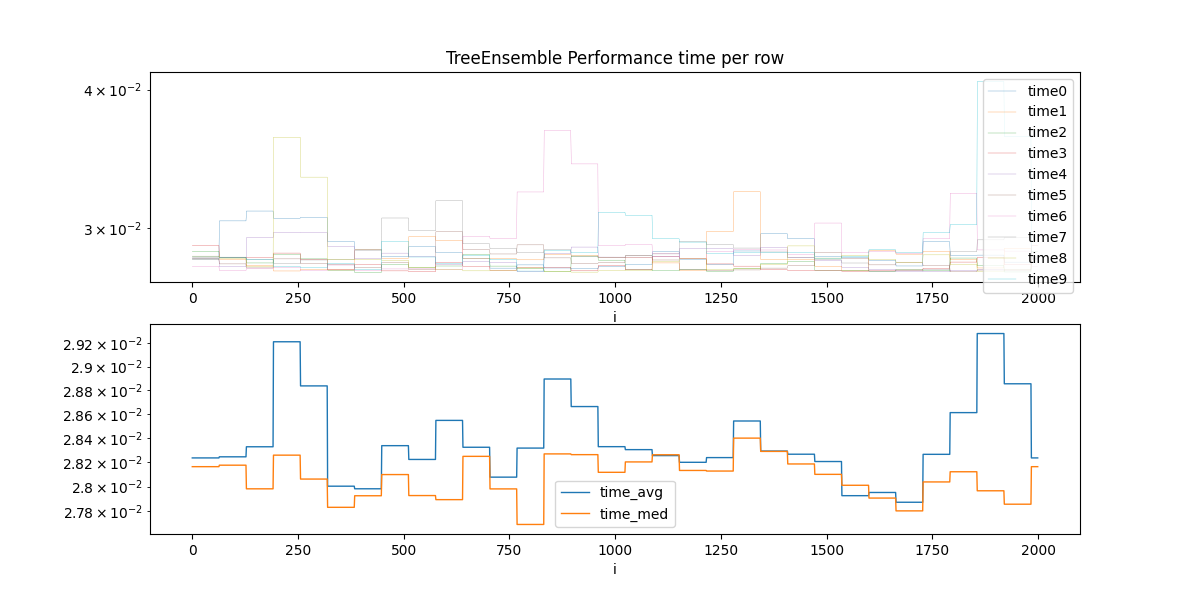

TreeEnsemble Performance

We simulate the computation of a TreeEnsemble with 50 features, 100 trees and a depth of 10 (so \(2^{10}\) nodes).
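
To make the simulated workload concrete, here is a minimal NumPy sketch of evaluating such an ensemble in batches of rows. It is not the C++ code behind `benchmark_cache_tree`. It assumes complete binary trees stored in arrays (children of node k at 2k+1 and 2k+2, so `max_depth` decisions per tree), with random features, thresholds and leaf values; `make_random_trees` and `predict_batched` are hypothetical names. The intuition is that processing a whole batch tree by tree keeps one tree's nodes in use for every row of the batch, so the batch size interacts with the cache.

```python
import numpy as np


def make_random_trees(n_trees, n_features, max_depth, rng):
    """Hypothetical random complete binary trees stored as arrays, one row per tree."""
    n_internal = 2**max_depth - 1
    features = rng.integers(0, n_features, size=(n_trees, n_internal))
    thresholds = rng.random((n_trees, n_internal))
    leaves = rng.random((n_trees, 2**max_depth))
    return features, thresholds, leaves


def predict_batched(X, features, thresholds, leaves, max_depth, batch_size):
    """Sum of the leaf values reached in every tree, computed batch by batch."""
    n_internal = 2**max_depth - 1
    out = np.zeros(X.shape[0])
    for start in range(0, X.shape[0], batch_size):
        xb = X[start : start + batch_size]
        rows = np.arange(xb.shape[0])
        for t in range(features.shape[0]):
            node = np.zeros(xb.shape[0], dtype=np.int64)  # every row starts at the root
            for _ in range(max_depth):
                go_right = xb[rows, features[t, node]] > thresholds[t, node]
                node = 2 * node + 1 + go_right  # left child 2k+1, right child 2k+2
            out[start : start + xb.shape[0]] += leaves[t, node - n_internal]
    return out


rng = np.random.default_rng(0)
features, thresholds, leaves = make_random_trees(100, 50, 10, rng)
X = rng.random((2000, 50))
pred = predict_batched(X, features, thresholds, leaves, max_depth=10, batch_size=256)
print(pred[:3])
```

The result does not depend on `batch_size`; only the memory access pattern, and therefore the speed, does.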

```python
dfs = []
cols = []
drop = []
for n in tqdm(range(10)):
    res = benchmark_cache_tree(
        n_rows=2000,
        n_features=50,
        n_trees=100,
        tree_size=1024,
        max_depth=10,
        search_step=64,
    )
    res = [[max(r.row, i), r.time] for i, r in enumerate(res)]
    # one column of row counts and one column of timings per run
    df = DataFrame(res)
    df.columns = [f"i{n}", f"time{n}"]
    dfs.append(df)
    cols.append(df.columns[-1])
    drop.append(df.columns[0])

    # stop after two runs when executed as a unit test
    if unit_test_going() and len(dfs) >= 2:
        break

# put the runs side by side and aggregate the timings row by row
df = concat(dfs, axis=1).reset_index(drop=True)
df["i"] = df["i0"]
df = df.drop(drop, axis=1)
df["time_avg"] = df[cols].mean(axis=1)
df["time_med"] = df[cols].median(axis=1)
df.head()
```
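
The loop builds one pair of columns per run and then reduces the timing columns row by row. A toy example with made-up numbers (not benchmark results) shows why the median is kept next to the mean: a single slow run shifts `time_avg` a lot but barely moves `time_med`.

```python
from pandas import DataFrame, concat

# Three made-up runs measured at the same batch sizes; run 2 hits a slow spike.
runs = [
    DataFrame({"i0": [64, 128, 192], "time0": [1.0, 0.9, 0.95]}),
    DataFrame({"i1": [64, 128, 192], "time1": [1.1, 0.8, 0.90]}),
    DataFrame({"i2": [64, 128, 192], "time2": [1.0, 5.0, 1.00]}),
]
cols = ["time0", "time1", "time2"]

# Same aggregation steps as in the benchmark loop.
df = concat(runs, axis=1).reset_index(drop=True)
df["i"] = df["i0"]
df = df.drop(["i0", "i1", "i2"], axis=1)
df["time_avg"] = df[cols].mean(axis=1)
df["time_med"] = df[cols].median(axis=1)
print(df[["i", "time_avg", "time_med"]])
```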


Estimation

Optimal batch size is among:

```text
      i  time_med  time_avg
0   768  0.027691  0.028317
1  1664  0.027801  0.027871
2   320  0.027830  0.028003
3  1920  0.027855  0.028854
4   576  0.027893  0.028547
5  1600  0.027905  0.027951
6   384  0.027924  0.027981
7   512  0.027926  0.028223
8  1856  0.027965  0.029282
9   704  0.027980  0.028077
```
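
The code that printed this ranking is not shown in this section. Assuming it simply sorts the aggregated frame by median time, one way to obtain the same layout is the following; the data below are made up.

```python
from pandas import DataFrame

# Made-up aggregated timings: batch size "i" with median and mean times.
df = DataFrame(
    {
        "i": [64, 128, 192, 256],
        "time_med": [0.031, 0.028, 0.029, 0.030],
        "time_avg": [0.032, 0.029, 0.028, 0.031],
    }
)

# Rank batch sizes by median time and keep the best candidates (assumed logic).
best = (
    df[["i", "time_med", "time_avg"]]
    .sort_values("time_med")
    .reset_index(drop=True)
    .head(10)
)
print("Optimal batch size is among:")
print(best)
```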

One possible estimation

Estimation: 1048.3608045617461
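
The computation behind this number is not shown either; its non-integer value suggests some kind of average over several good batch sizes. As an illustration only, and not necessarily the formula used here, a hypothetical estimator could average the batch sizes of the k fastest rows, weighting each by the inverse of its median time.

```python
from pandas import DataFrame


def weighted_best_batch(df, k=10):
    """Hypothetical estimator: mean of the k fastest batch sizes, weighted by 1 / time_med."""
    best = df.nsmallest(k, "time_med")
    weights = 1.0 / best["time_med"]
    return float((best["i"] * weights).sum() / weights.sum())


# Made-up timings chosen so the arithmetic is exact.
df = DataFrame({"i": [256, 512, 1024, 2048], "time_med": [0.5, 0.25, 0.25, 0.5]})
print(weighted_best_batch(df, k=2))  # 512 and 1024 get the same weight -> 768.0
```

Weighting by inverse time favours the fastest sizes while still smoothing over the noise between near-equal candidates.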

Plots.

```python
cols_time = ["time_avg", "time_med"]
fig, ax = plt.subplots(2, 1, figsize=(12, 6))
df.set_index("i").drop(cols_time, axis=1).plot(
    ax=ax[0], title="TreeEnsemble Performance time per row", logy=True, linewidth=0.2
)
df.set_index("i")[cols_time].plot(ax=ax[1], linewidth=1.0, logy=True)
fig.savefig("plot_bench_cpu.png")
```
