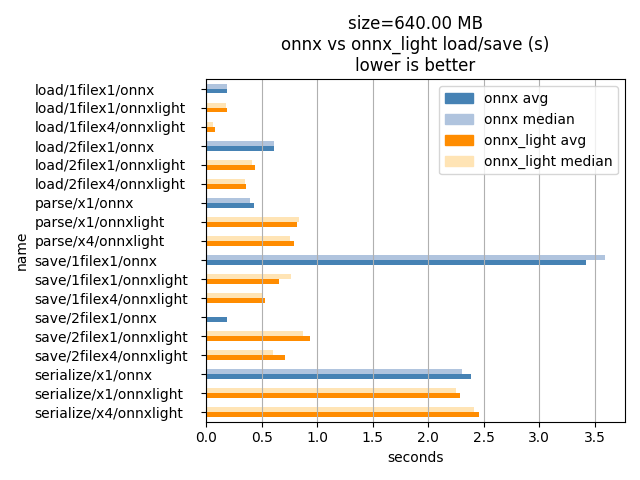

Measures loading and saving time for an ONNX model

This script builds a small ONNX model and benchmarks the time to load and save it using onnx and onnx_light.onnx. It only compares the Python bindings; the model structure is identical in both cases.

The onnx_light.onnx implementation does not depend on protobuf and therefore avoids the overhead of the protobuf serialization layer. It also supports parallel loading of tensor weights through the parallel keyword.

import os
import time
import tempfile
import numpy as np
import pandas
import onnx
import onnx.helper as oh
import onnx.numpy_helper as onh
import onnx_light.onnx as onnxl

Build a small synthetic ONNX model

We create a model with several Gemm nodes and large initializers so that the load/save times are measurable.

N_INIT = 20
DIM = 256


def make_model(n_init: int = N_INIT, dim: int = DIM) -> onnx.ModelProto:
    """Returns a synthetic ONNX model with *n_init* Gemm initializers of size *dim*."""
    initializers = []
    nodes = []
    inputs = [oh.make_tensor_value_info("X", onnx.TensorProto.FLOAT, [None, dim])]
    prev = "X"
    for i in range(n_init):
        weight_name = f"W{i}"
        out_name = f"Y{i}"
        w = np.random.randn(dim, dim).astype(np.float32)
        initializers.append(onh.from_array(w, name=weight_name))
        nodes.append(oh.make_node("Gemm", [prev, weight_name], [out_name], transB=1))
        prev = out_name
    outputs = [oh.make_tensor_value_info(prev, onnx.TensorProto.FLOAT, [None, dim])]
    graph = oh.make_graph(nodes, "bench_graph", inputs, outputs, initializer=initializers)
    model = oh.make_model(graph, opset_imports=[oh.make_opsetid("", 18)], ir_version=9)
    return model


model = make_model()
size_bytes = model.ByteSize()
print(f"Model size: {size_bytes / 2 ** 20:.3f} MB")

Model size: 5.001 MB

Write the model to a temporary file

tmp_dir = tempfile.mkdtemp()
onnx_path = os.path.join(tmp_dir, "bench.onnx")
onnx.save(model, onnx_path)
file_size = os.path.getsize(onnx_path)
print(f"File size : {file_size / 2 ** 20:.3f} MB")

File size : 5.001 MB
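The reported size can be sanity-checked by hand: the model holds N_INIT weight matrices of DIM x DIM float32 values, so the raw tensor payload is 20 x 256 x 256 x 4 bytes. The sketch below only redoes that arithmetic; BYTES_PER_FLOAT32 and the other variable names are illustrative and not part of the original script.

```python
N_INIT = 20
DIM = 256
BYTES_PER_FLOAT32 = 4  # float32 is 4 bytes per element

# Raw weight payload: n_init matrices of dim x dim float32 values.
payload_bytes = N_INIT * DIM * DIM * BYTES_PER_FLOAT32
payload_mb = payload_bytes / 2**20
print(f"raw weights: {payload_bytes} bytes = {payload_mb:.3f} MB")
```

The weights alone account for 5.000 MB, so the remaining 0.001 MB or so in the reported 5.001 MB is presumably the graph structure and serialization framing.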

Benchmark helper

def measure(name: str, fn, n: int = 5) -> dict:
    """Runs *fn* *n* times and records timing statistics."""
    times = []
    for _ in range(n):
        t0 = time.perf_counter()
        fn()
        times.append(time.perf_counter() - t0)
    return {"name": name, "avg": float(np.mean(times)), "min": float(np.min(times))}

data = []
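As a quick illustration of how such a helper behaves, the sketch below times a stand-in workload (a standard-library sort, not an ONNX operation) and also records the median, which is less sensitive to a single slow run than the average. The measure_robust name, the warmup parameter, and the workload are assumptions made for this example; they are not part of the original script.

```python
import statistics
import time


def measure_robust(name, fn, n=5, warmup=1):
    """Variant of measure(): untimed warm-up calls, plus a median."""
    for _ in range(warmup):
        fn()  # warm-up call, deliberately not timed
    times = []
    for _ in range(n):
        t0 = time.perf_counter()
        fn()
        times.append(time.perf_counter() - t0)
    return {
        "name": name,
        "avg": statistics.fmean(times),
        "median": statistics.median(times),
        "min": min(times),
    }


# Stand-in workload: sort 10,000 integers given in reverse order.
row = measure_robust("sort/10k", lambda: sorted(range(10_000, 0, -1)))
print(f"{row['name']} avg={row['avg'] * 1e3:.3f} ms min={row['min'] * 1e3:.3f} ms")
```

With only five samples, one slow run (a cold cache, a thread pool starting up) can dominate the average, which is why reporting the minimum or the median alongside it is useful.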

Load with onnx

load/onnx avg=1.6 ms

Load with onnx_light.onnx

load/onnxlight avg=0.9 ms

Load with onnx_light.onnx using parallel tensor loading

load/onnxlight/x4 avg=5.8 ms
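The general idea behind parallel tensor loading can be sketched with the standard library alone: once the byte range of every tensor is known, each range can be read by a separate worker. The example below is a generic illustration under that assumption. It does not reflect how onnx_light implements its parallel keyword, and the file layout, read_chunk, and the choice of four workers are all hypothetical.

```python
import os
import tempfile
from concurrent.futures import ThreadPoolExecutor


def read_chunk(path, offset, length):
    """Reads *length* bytes at *offset*; each call opens its own file handle."""
    with open(path, "rb") as f:
        f.seek(offset)
        return f.read(length)


# Hypothetical layout: 8 "tensors" of 64 KiB stored back to back.
chunk_size = 64 * 1024
blobs = [bytes([i]) * chunk_size for i in range(8)]
index = [(i * chunk_size, chunk_size) for i in range(len(blobs))]

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "weights.bin")
    with open(path, "wb") as f:
        f.write(b"".join(blobs))

    # Four workers, loosely mirroring the "x4" label above.
    with ThreadPoolExecutor(max_workers=4) as pool:
        loaded = list(pool.map(lambda item: read_chunk(path, *item), index))

# pool.map preserves input order, so the chunks line up with the originals.
print(loaded == blobs)
```

For a file of only a few megabytes, the cost of starting workers may outweigh any gain, which would be consistent with the x4 average above being higher than the single-threaded one.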

Save with onnx

save/onnx avg=17.9 ms

Save with onnx_light.onnx

save/onnxlight avg=15.2 ms

Save with onnx_light.onnx using external data

out_ext = os.path.join(tmp_dir, "out_ext.onnx")
out_ext_data = out_ext + ".data"
data.append(
    measure("save/onnxlight/ext", lambda: onnxl.save(onxl, out_ext, location=out_ext_data))
)
print(f"save/onnxlight/ext avg={data[-1]['avg'] * 1e3:.1f} ms")

save/onnxlight/ext avg=13.9 ms

Results

df = pandas.DataFrame(data).set_index("name")
print(df)

                         avg       min
name
load/onnx           0.001604  0.001377
load/onnxlight      0.000916  0.000699
load/onnxlight/x4   0.005784  0.001183
save/onnx           0.017938  0.010339
save/onnxlight      0.015176  0.002952
save/onnxlight/ext  0.013878  0.002989
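The table is easier to interpret as ratios. The sketch below copies the timings from the table above and computes how many times faster onnx_light.onnx is than onnx, by average and by minimum. It also bounds the slowest parallel-load run, assuming the default n = 5 of measure was used for that row. The results dictionary and the ratio helper are illustrative names, not part of the original script.

```python
# Timings in seconds, copied from the table above: (avg, min).
results = {
    "load/onnx": (0.001604, 0.001377),
    "load/onnxlight": (0.000916, 0.000699),
    "load/onnxlight/x4": (0.005784, 0.001183),
    "save/onnx": (0.017938, 0.010339),
    "save/onnxlight": (0.015176, 0.002952),
    "save/onnxlight/ext": (0.013878, 0.002989),
}
AVG, MIN = 0, 1


def ratio(baseline, candidate, column):
    """Baseline time divided by candidate time (>1 means candidate is faster)."""
    return results[baseline][column] / results[candidate][column]


for op in ("load", "save"):
    by_avg = ratio(f"{op}/onnx", f"{op}/onnxlight", AVG)
    by_min = ratio(f"{op}/onnx", f"{op}/onnxlight", MIN)
    print(f"{op}: x{by_avg:.2f} by avg, x{by_min:.2f} by min")

# Assumption: n = 5 runs, so the total time is 5 * avg. If the other four
# runs each took at least the minimum, the slowest run took at most
# 5 * avg - 4 * min.
avg, best = results["load/onnxlight/x4"]
worst_upper_bound = 5 * avg - 4 * best
print(f"load/onnxlight/x4 slowest run <= {worst_upper_bound * 1e3:.1f} ms")
```

The large gap between the average and the minimum on several rows suggests that a few slow runs weigh heavily on the averages, so the minimum column is probably the more stable basis for comparison at this sample size.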

Plot the results.

ax = df[["avg"]].plot.barh(
    title=f"size={file_size / 2 ** 20:.2f} MB\nonnx vs onnx_light load/save (s)\nlower is better",
    xlabel="seconds",
)
ax.figure.tight_layout()
ax.figure.savefig("plot_onnx_time.png")

Total running time of the script: (0 minutes 1.551 seconds)